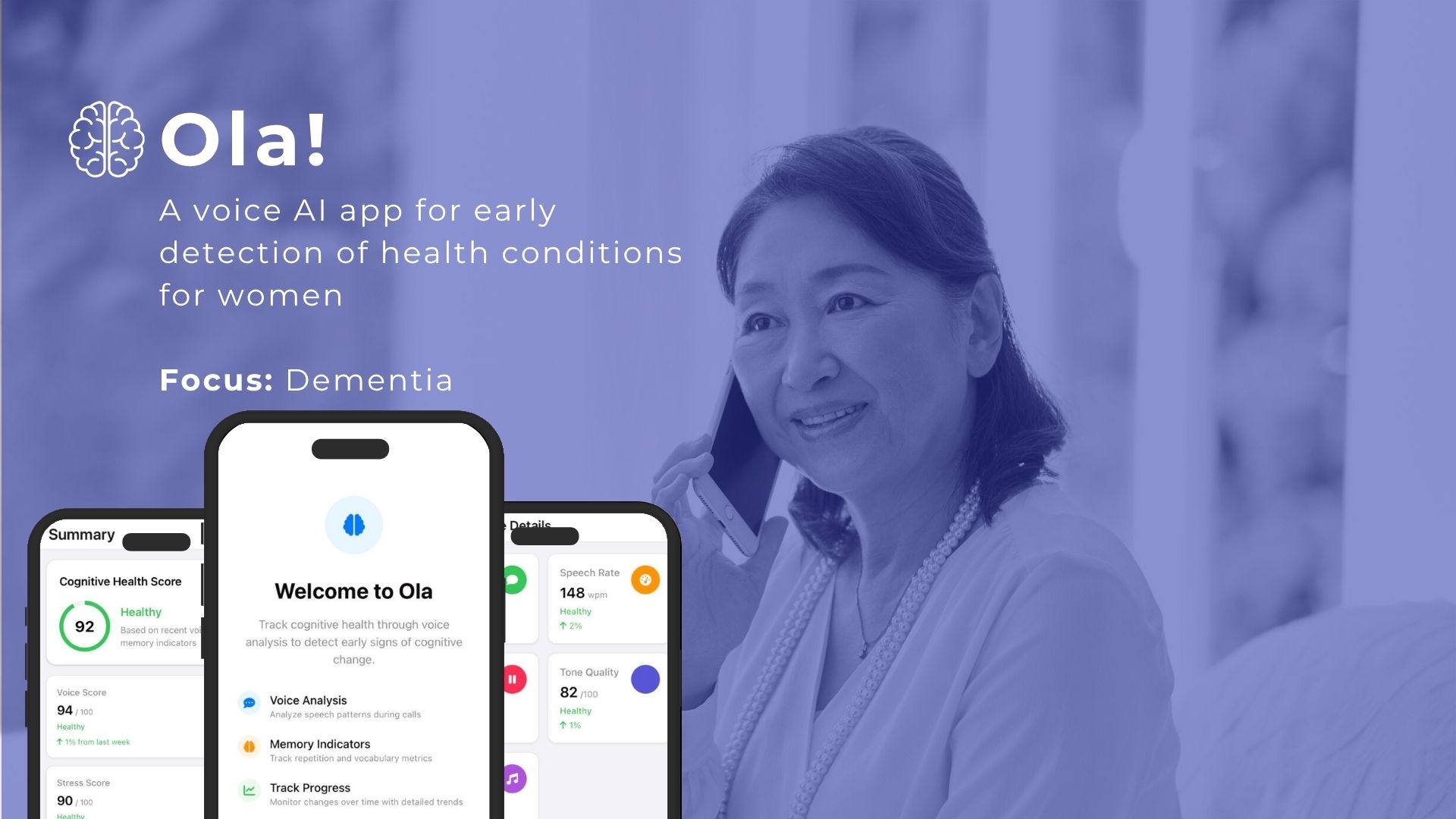

Ola!

Voice AI · Women's Health · MIT AI Hackathon Winner · 2025

70% of Alzheimer's patients are women, yet cognitive screening is almost universally designed for elderly patients in clinical settings. Symptoms in middle-aged women are dismissed as stress or menopause. Formal diagnosis comes 10 to 15 years after the first signs appear. There is no product designed for the decade before the diagnosis.

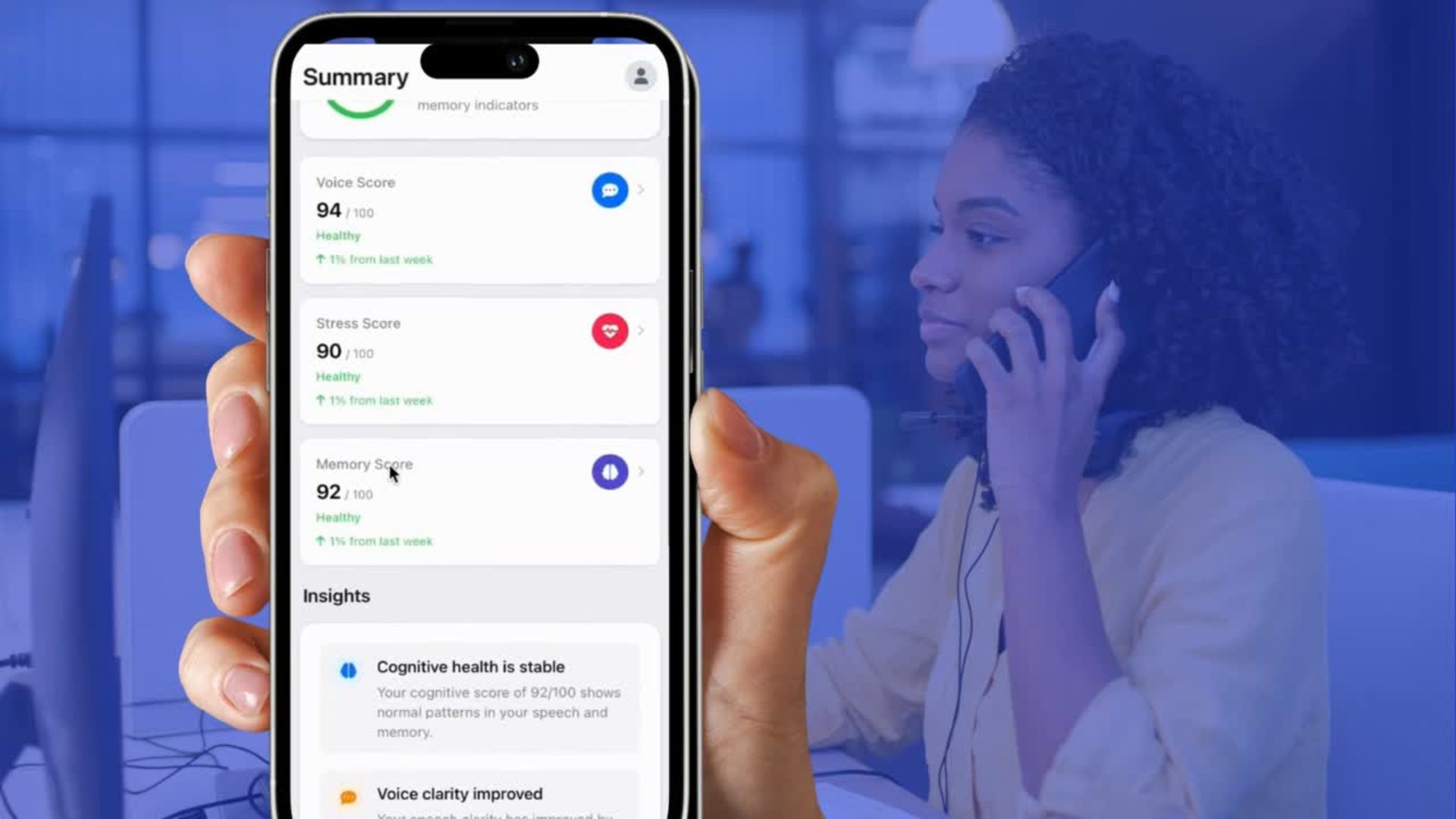

The core product decision was to make detection entirely passive. Ola! runs in the background of normal phone calls, extracting six speech biomarkers correlated with early cognitive change: speech rate, pitch variation, pause frequency, filler word density, vocabulary richness, and tonal consistency.
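To make the biomarkers concrete, here is a minimal sketch of how four of the six (speech rate, pause frequency, filler word density, vocabulary richness) could be computed from a word-level transcript with timestamps. It is an illustration, not Ola!'s actual pipeline: the `Word` type, the filler list, the 0.5 s pause threshold, and the use of type-token ratio for vocabulary richness are all assumptions. Pitch variation and tonal consistency need the audio signal itself and are left out.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the call
    end: float


# Hypothetical values chosen for illustration only.
FILLERS = {"um", "uh", "er", "hmm"}
PAUSE_THRESHOLD_S = 0.5


def transcript_biomarkers(words: list[Word]) -> dict[str, float]:
    """Compute four transcript-level measures from timestamped words."""
    if not words:
        raise ValueError("need at least one word")
    duration_s = words[-1].end - words[0].start
    if duration_s <= 0:
        raise ValueError("transcript must span a positive duration")
    duration_min = duration_s / 60.0

    tokens = [w.text.lower().strip(".,!?") for w in words]
    # Silence between the end of one word and the start of the next.
    gaps = [nxt.start - cur.end for cur, nxt in zip(words, words[1:])]
    pauses = sum(1 for g in gaps if g >= PAUSE_THRESHOLD_S)
    fillers = sum(1 for t in tokens if t in FILLERS)

    return {
        "speech_rate_wpm": len(words) / duration_min,
        "pauses_per_min": pauses / duration_min,
        "filler_density": fillers / len(tokens),
        # Type-token ratio: distinct words divided by total words.
        "type_token_ratio": len(set(tokens)) / len(tokens),
    }


sample = [
    Word("I", 0.0, 0.2),
    Word("um", 0.3, 0.5),
    Word("went", 1.5, 1.8),
    Word("um", 1.9, 2.1),
    Word("home", 2.2, 3.0),
]
metrics = transcript_biomarkers(sample)
```

In a real system the timestamps would come from a speech recognizer, and each measure would be compared against the user's own baseline rather than read in isolation.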

The critical design constraint was that this user is not a patient yet. She is a capable, busy professional who is worried but not alarmed. Every interface decision reinforces agency rather than anxiety. Scores are framed as trends, not diagnoses. The weekly report appears and disappears. The product deliberately does not encourage daily engagement, because that would create exactly the anxiety spiral we were designing against.
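One way to read "trends, not diagnoses" in code: the report surfaces the direction of a score over recent weeks instead of the raw number. The sketch below fits a least-squares slope to weekly scores and maps it to a plain-language label. The score scale, the `tolerance` value, and the three labels are hypothetical, chosen only to illustrate the framing.

```python
def weekly_trend(scores: list[float], tolerance: float = 0.5) -> str:
    """Label the direction of weekly scores using a least-squares slope."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two weekly scores")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(scores))
    den = sum((i - mean_x) ** 2 for i in range(n))
    slope = num / den  # change in score per week

    # Small week-to-week movement is reported as steady, not as a change.
    if abs(slope) <= tolerance:
        return "steady"
    return "rising" if slope > 0 else "falling"


steady = weekly_trend([80, 80, 81, 80])
falling = weekly_trend([80, 78, 76, 74])
rising = weekly_trend([70, 72, 74])
```

The tolerance band is what keeps ordinary week-to-week noise from being reported as a change.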

Onboarding was reduced to one permission screen. The app explains what it listens for, then disappears. The value is in the periodic report, not the app itself.